Benchmark Datasets

Explore the curated prompts and datasets used to evaluate model responses, with notes for researchers on setup, reproducibility, and evaluation metrics.

About

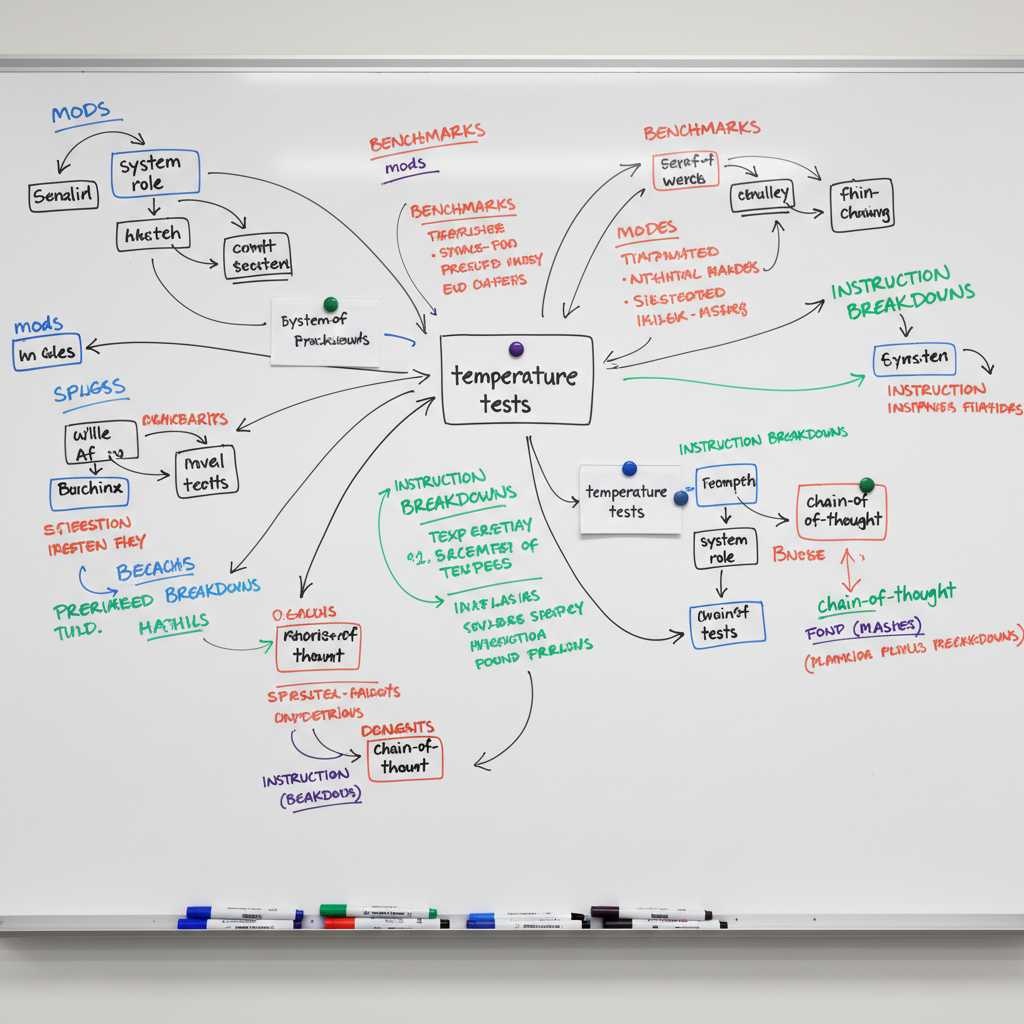

Prompts Benchmarking

This notebook outlines the benchmarking philosophy, the curated datasets, and the evaluation criteria applied to prompts and models, so that results can be compared consistently and improved iteratively across experiments.